Unlocking AI in space: the case for greater industry and space agency collaboration

For decades, space has served as humanity’s most demanding testing laboratory, where only the most resilient technologies survive the vacuum, radiation and temperature extremes beyond Earth’s protective embrace. Today, we stand at an inflection point where artificial intelligence is poised to fundamentally transform how we explore, understand and operate in space. But making AI-powered space exploration a reality will depend on a cooperative ecosystem of hardware providers and space exploration agencies working together to develop, evaluate and de-risk space-rated solutions.

The opportunity is vast. From Earth observation satellites that must process terabytes of sensor data in real time to Mars rovers making split-second navigation decisions millions of miles from human oversight, AI promises to unlock unprecedented autonomous capabilities across the space domain. Realizing this vision demands more than sophisticated algorithms. It requires hardware engineered to withstand the universe’s most unforgiving environments, where a single component failure can jeopardize a billion-euro mission.

Each phase of space exploration, from launch to deep space operations, presents distinct challenges that AI can uniquely address, including:

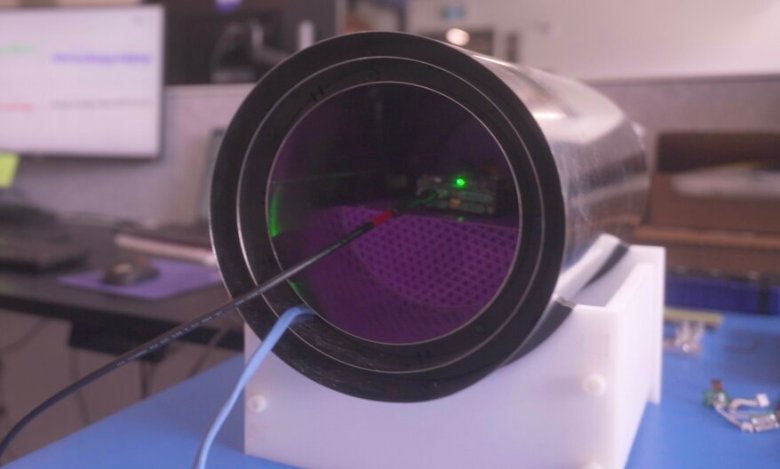

- Faster interpretation of image and sensor data: For planetary exploration and Earth observation — including weather and climate monitoring, as well as disaster response — edge-optimized AI could enable orbiting satellites to process, analyze and interpret high-resolution images and other data locally. They could then determine which data is the highest priority for transmission back to Earth. This would reduce the need to transmit large volumes of raw data and optimize the usage of limited communications bandwidth, while potentially improving the response time on Earth for weather emergencies or other disasters.

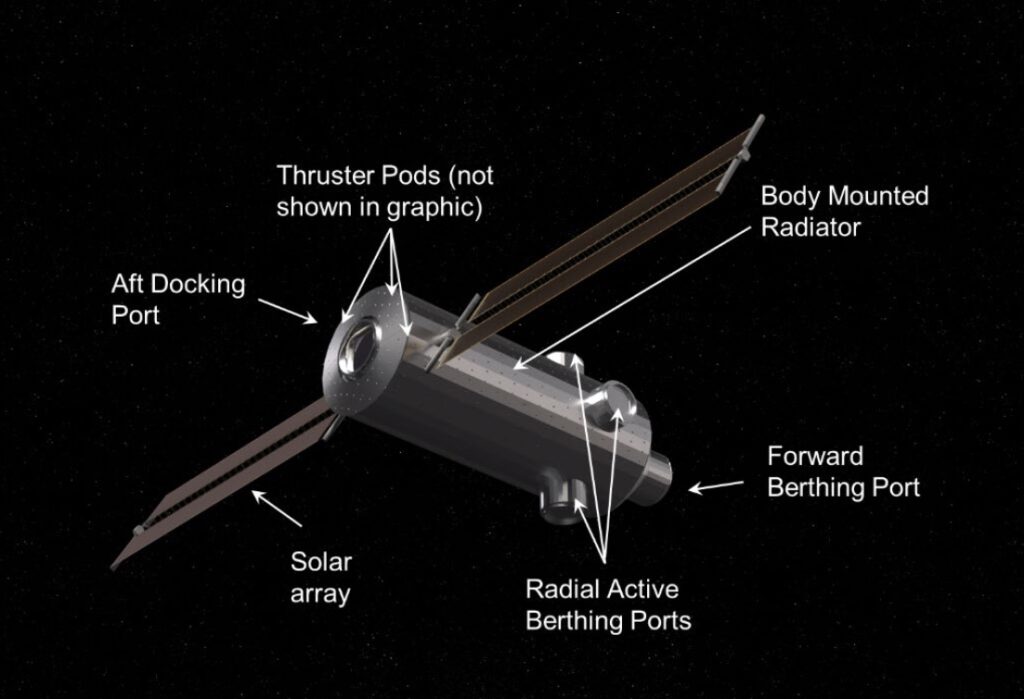

- Real-time autonomy and navigation: Constrained bandwidth and the delays in round-trip communication across the vast distances of space are major challenges for vehicle control and guidance, particularly when the unexpected occurs. Real-time AI inference could significantly improve the capabilities of space vehicles to maneuver independently, allowing them to avoid collisions or conduct autonomous docking operations (an increasingly important need as orbital space becomes more crowded with vehicles and debris). On-vehicle AI inference could also give planetary rovers the ability to detect and avoid objects in their path without ground control intervention.

- Vehicle health monitoring: AI could also be used to monitor onboard systems and predict potential failures well before a threshold is triggered. This could improve overall vehicle reliability, lifetime and performance.
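To make the first item in the list above concrete, here is a minimal sketch of onboard downlink prioritization. It assumes each image tile already carries a priority score from some onboard model; the `Tile` class, the `select_for_downlink` function, the scores and the byte budget are all hypothetical names and values chosen for illustration, not part of any real flight software.

```python
from dataclasses import dataclass


@dataclass
class Tile:
    tile_id: str
    size_bytes: int
    score: float  # hypothetical priority from an onboard model, 0.0 to 1.0


def select_for_downlink(tiles, budget_bytes):
    """Greedy selection: take the highest-scoring tiles that still fit the budget."""
    chosen = []
    remaining = budget_bytes
    for tile in sorted(tiles, key=lambda t: t.score, reverse=True):
        if tile.size_bytes <= remaining:
            chosen.append(tile.tile_id)
            remaining -= tile.size_bytes
    return chosen


# Illustrative data only: sizes and scores are made up.
tiles = [
    Tile("cloud_cover", 400, 0.10),
    Tile("wildfire_front", 600, 0.95),
    Tile("open_ocean", 500, 0.05),
    Tile("flooded_delta", 300, 0.80),
]

print(select_for_downlink(tiles, budget_bytes=1000))
# prints ['wildfire_front', 'flooded_delta']
```

A greedy pass like this is simple to reason about but is not guaranteed to make the best use of the budget; a real system would also have to weigh data freshness, contact windows and compression.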

Yet, between these promising applications and their widespread deployment lies a significant engineering challenge. The same environment that makes space the ultimate proving ground for technology also creates formidable obstacles for the AI hardware vendors and space agencies tasked with making these systems space-ready.

Unlike terrestrial data centers, where processors operate in climate-controlled environments with redundant power supplies and human oversight, space-based AI hardware must function autonomously for years or even decades without maintenance or repair. When a single stack of components fails during a mission, it cannot simply be replaced. And although space vehicles are designed for reliability, with double or even triple redundancy for critical systems, non-critical systems aren’t always as robust. If a space science mission depends on AI hardware that fails, billions of euros in investment and years of scientific research could be compromised.

This reality forces hardware designers to reconsider fundamental assumptions about processor architecture, manufacturing processes and system design. Traditional commercial AI chips optimized for maximum performance-per-watt must be reimagined for an environment where longevity, fault tolerance and radiation hardening take precedence over raw computational speed. The challenges are as diverse as they are demanding:

- Compute throughput: While some applications can be run effectively on lower-end hardware, demanding AI applications, such as real-time image processing, require high performance and compute throughput. AI chips will need to be more than just small and power-efficient; they will need enough processing power to run multi-modal models effectively. Furthermore, applications that depend on large models, such as Earth observation, can still require large numbers of parallel operations even after quantization has reduced the model size. More compute throughput means the hardware can handle more data or run inference faster, but engineers must also account for memory bandwidth, latency and power efficiency, which all impact performance.

- Power and size constraints: Power efficiency is a key requirement for onboard systems. AI accelerators for space missions must therefore combine high performance with low power consumption. They might also employ additional power-efficiency methods, including duty cycling (powering down or idling the entire chip when not in use) and power gating (cutting off power to parts of the chip not being used). Interestingly, these strategies could also reduce the potential for radiation-induced errors.

- Environmental conditions: The space environment can be both extremely cold and extremely hot. It also involves exposure to high levels of cosmic radiation, which can induce errors in semiconductors, such as single-event upsets (memory bit flips) or, even worse, single-event latchups (which can be destructive). Furthermore, the failure modes of these advanced AI chips are vastly more complex than those of classic processors. Because they rely on massively parallel architectures, a radiation-induced error might not cause a simple system crash; instead, it could result in silent data corruption, degraded inferences, or subtle but critical misclassifications. Understanding and mitigating these intricate failure mechanisms requires thorough investigation and specialized testing protocols far beyond standard validation. AI devices will have to deploy mitigation techniques to lower the risk of service outages, especially when targeting safety-critical applications like autonomous landing or docking.

- Supply resilience and long-term availability: For space missions, the reliability of the hardware supply chain is as critical as the performance of the technology itself. Components must remain available and supported over extended lifecycles, often far beyond typical commercial timelines. Crucially, this required longevity extends beyond just the physical hardware; it mandates sustained software support. The complex software stacks, drivers and development tools required to run AI models on specialized silicon must be maintained and updated over missions spanning years or decades. AI hardware selected for space applications should therefore come from suppliers with clear product roadmaps, robust sourcing strategies and measures to mitigate obsolescence. Ensuring resilience and continuity in the supply chain reduces mission risk and guarantees that advanced computing solutions can be maintained and scaled throughout the duration of space programs.
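As one illustration of the mitigation techniques mentioned under environmental conditions, the sketch below shows bitwise majority voting across three redundant copies of a value, the idea behind triple modular redundancy. The article does not name this technique; it is used here only as a familiar example, and the `majority_vote` function and the sample values are hypothetical.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Return the bitwise majority of three redundant copies of the same word."""
    # Each output bit is 1 only if at least two of the three inputs have it set.
    return (a & b) | (a & c) | (b & c)


stored = 0b1011_0010
upset = stored ^ (1 << 5)  # simulate a single-event upset flipping bit 5 in one copy

recovered = majority_vote(stored, upset, stored)
print(recovered == stored)
# prints True
```

Voting of this kind masks a bit flip in a single copy. It does not help if two copies are corrupted in the same bit, and it does nothing against a latchup, which has to be handled at the power and circuit level.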

None of these challenges is insurmountable. But to truly unlock the transformative potential of AI in space, we must move beyond innovation in isolation. It’s time for AI hardware developers and space agencies to forge deeper partnerships — co-designing, testing, validating and de-risking silicon solutions that can thrive in the harshest environments known to science.

The blistering pace of AI innovation is largely being driven by companies, often startups, whose solutions target commercial applications and are not optimized for deployment in the space environment. However, agencies such as the European Space Agency have extensive experience in radiation characterization and mitigation techniques, and they can share that expertise to support these startups.

With AI increasingly seen as a strategic asset for nations, investing in European homegrown AI technologies for space applications offers a strong medium- to long-term return on investment. Public-private partnerships are the key to fostering the development of future AI-powered missions.

Laurent Hill is a microelectronics and data handling engineer at the European Space Agency.

Gianluca Furano is a data systems engineer at the European Space Agency.

Livia Manovi is a research fellow at the European Space Agency.

Jean Vieville is director of channel and OEM sales, EMEA for Axelera AI.

SpaceNews is committed to publishing our community’s diverse perspectives. Whether you’re an academic, executive, engineer or even just a concerned citizen of the cosmos, send your arguments and viewpoints to opinion (at) spacenews.com to be considered for publication online or in our next magazine. If you have something to submit, read some of our recent opinion articles and our submission guidelines to get a sense of what we’re looking for. The perspectives shared in these opinion articles are solely those of the authors and do not necessarily represent their employers or professional affiliations.

Stay Informed With the Latest & Most Important News

Previous Post

Next Post

-

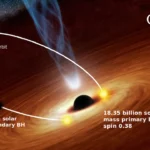

01Two Black Holes Observed Circling Each Other for the First Time

01Two Black Holes Observed Circling Each Other for the First Time -

02From Polymerization-Enabled Folding and Assembly to Chemical Evolution: Key Processes for Emergence of Functional Polymers in the Origin of Life

02From Polymerization-Enabled Folding and Assembly to Chemical Evolution: Key Processes for Emergence of Functional Polymers in the Origin of Life -

03Astronomy 101: From the Sun and Moon to Wormholes and Warp Drive, Key Theories, Discoveries, and Facts about the Universe (The Adams 101 Series)

03Astronomy 101: From the Sun and Moon to Wormholes and Warp Drive, Key Theories, Discoveries, and Facts about the Universe (The Adams 101 Series) -

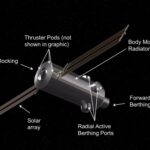

04True Anomaly hires former York Space executive as chief operating officer

04True Anomaly hires former York Space executive as chief operating officer -

05Φsat-2 begins science phase for AI Earth images

05Φsat-2 begins science phase for AI Earth images -

06Hurricane forecasters are losing 3 key satellites ahead of peak storm season − a meteorologist explains why it matters

06Hurricane forecasters are losing 3 key satellites ahead of peak storm season − a meteorologist explains why it matters -

07Binary star systems are complex astronomical objects − a new AI approach could pin down their properties quickly

07Binary star systems are complex astronomical objects − a new AI approach could pin down their properties quickly