Do AI tools undermine trust in geospatial imagery?

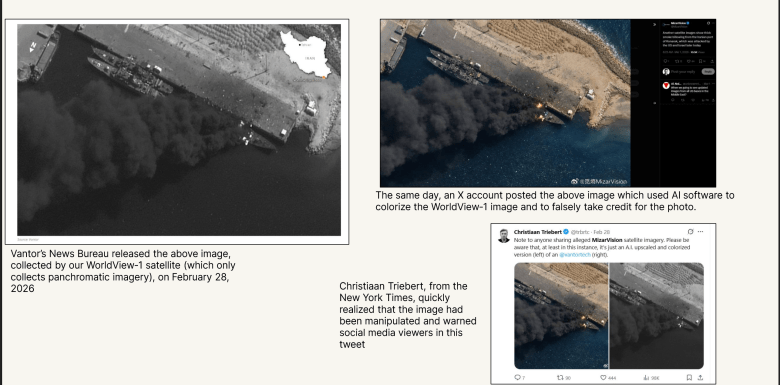

On Feb. 28, the first day of U.S. military strikes on Iran, Chinese AI startup MizarVision posted an image on social media site X of a ship burning at Iran’s Konarak Naval Base.

The image bore a striking resemblance to a WorldView-1 image released earlier that day by Vantor’s News Bureau, but the MizarVision image was in color, and WorldView-1 collects only black-and-white (panchromatic) imagery.

“They ran it through an AI program and colorized it,” Stephen Wood, Vantor News Bureau senior director, told SpaceNews. “That was the first time I’ve ever seen somebody take one of our images and digitally alter it without our permission.”

The English-language daily Tehran Times also posted fake satellite imagery appearing to show the destruction of a U.S. radar base in Qatar. In fact, it was an AI-manipulated Google Earth image of a U.S. base in Bahrain, according to the Central European Media Digital Observatory.

“In the recent Iran-related events, we’ve already seen AI-generated or AI-altered ‘satellite’ images circulating as public-facing information manipulation,” University of Washington geography professor Bo Zhao said by email. “More importantly, this trajectory is almost inevitable and will only become easier over time — especially as systems like ChatGPT can now generate highly realistic ‘remote sensing’ imagery. This is a qualitative shift from earlier techniques.”

Falsified satellite imagery is a relatively new phenomenon.

“I’m not saying that it’s never occurred, but that hasn’t become a common norm by any means,” said Frank Backes, Space Information Sharing and Analysis Center president and former Capella Space chief executive.

Geospatial industry executives say the wealth of available satellite imagery makes it easier than ever to debunk false claims. Vantor’s News Bureau revealed that news reports of a Jan. 29 militant attack on an airport in Niamey, Niger, featured an image with AI-generated smoke and fires. Vantor knew the airport image was AI-generated because its GeoEye-1 satellite captured imagery of the same airport on the same day.

“We were able to look at features on the ground, compare it to the [online] images, and very readily see that the entire thing had been altered,” Wood said. “In fact, it was not even the right airport. With so much collection available, commercial satellite imagery makes it easy to point out a fake image.”

Customers also turn to Ursa Space Systems to compare multiple sources of imagery and data, including electro-optical, synthetic aperture radar, automatic identification system and open-source intelligence, to determine “what is happening or not happening in an area,” said Adam Maher, Ursa Space Systems chief executive and founder. “Today, because of the commercial availability of data, you can verify imagery with a second source.”

Chain of Custody

Well-known satellite imagery providers go to great pains to maintain credibility. To win government contracts, companies must demonstrate tight control of supply chains, robust cybersecurity practices and careful vetting of prospective customers to comply with national security and export controls regulations.

“When reputable firms that have been in business for years release an image, there’s a much greater confidence that it’s legitimate and it has not been altered,” Wood said. “When a friend of a friend or a media group of a media group starts posting material, that’s where you get much less credible information and the chances that things have been altered goes up exponentially.”

As a result, customers should rely on trusted vendors, said Luke Fischer, SkyFi co-founder and chief executive. “It’s no longer about believing the pixel. It’s about verifying the pipeline.”

Seasoned Earth-observation satellite owners and operators deliver imagery with an extensive metadata package that identifies the sensor, the date and time of image capture, the precise location pictured and the chain of custody, which covers the entire process from satellite tasking to data delivery.
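One way to picture such a record is as a hash chain, in which each custody step stores a fingerprint of the image and of the step before it. The sketch below is a minimal illustration under stated assumptions, not any vendor's actual system: the function names `append_step` and `verify_chain`, the step names and the metadata fields are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first custody step


def _digest(obj) -> str:
    """SHA-256 of a deterministic JSON encoding (sorted keys, compact)."""
    data = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(data).hexdigest()


def append_step(chain: list, step: str, metadata: dict, image_bytes: bytes) -> None:
    """Add one custody step that commits to the image, metadata and prior step."""
    body = {
        "step": step,  # hypothetical step name, e.g. "tasking" or "delivery"
        "metadata": metadata,  # hypothetical fields: sensor, capture time, location
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "prev_hash": chain[-1]["hash"] if chain else GENESIS,
    }
    chain.append({**body, "hash": _digest(body)})


def verify_chain(chain: list, image_bytes: bytes) -> bool:
    """True only if every link is intact and the file matches the last step."""
    prev = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    received = hashlib.sha256(image_bytes).hexdigest()
    return bool(chain) and chain[-1]["image_sha256"] == received
```

With a chain built from steps such as "tasking," "downlink" and "delivery," `verify_chain` returns `False` for a colorized copy of the image or for a log whose middle entry was edited. A hash chain alone only detects changes; a production system would presumably also sign each entry so that an outsider could not rebuild the whole chain.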

When that chain of custody is intact, “you know that nobody outside the organization could have touched an image,” Wood said.

To protect the chain of custody, customers are prohibited from reselling imagery purchased from leading vendors or through SkyFi’s geospatial marketplace, which includes imagery and analytics from more than 50 providers.

And while AI can help nation-state actors create disinformation, it also speeds up detection of AI-generated artifacts in images and facilitates comparison of imagery from various providers.

“If a scene claims to be from a specific satellite at a specific time, you can now validate that against the actual two-line element,” an orbital data set from which a satellite’s location at a given time can be computed, Fischer said. “There’s definitely a net positive with AI.”
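A coarse version of that check can be sketched in a few lines. The example below is an illustration only, not SkyFi's method: it verifies the checksum of a two-line element's second line, reads the orbital inclination and tests whether a claimed scene latitude is reachable at all. The element line is a widely reproduced example for the International Space Station, used purely as sample input. The function names and the 5-degree off-nadir margin are assumptions, and a real validation would propagate the orbit to the claimed capture time with an SGP4 implementation.

```python
# Sample input only: a widely reproduced ISS element set (line 2),
# not related to any satellite mentioned in the article.
ISS_LINE2 = "2 25544  51.6416 247.4627 0006703 130.5360 325.0288 15.72125391563537"


def tle_checksum_ok(line: str) -> bool:
    """Each digit adds its value, '-' adds 1, all else adds 0; compare mod 10."""
    line = line.strip()
    total = sum(int(c) if c.isdigit() else (1 if c == "-" else 0) for c in line[:-1])
    return total % 10 == int(line[-1])


def max_ground_latitude(line2: str) -> float:
    """Highest latitude the ground track reaches, from the inclination field."""
    inclination = float(line2.split()[2])  # third whitespace-separated field
    return inclination if inclination <= 90.0 else 180.0 - inclination


def latitude_is_plausible(line2: str, scene_lat_deg: float,
                          off_nadir_margin_deg: float = 5.0) -> bool:
    """Necessary (not sufficient) test that the satellite could see the scene.

    off_nadir_margin_deg is an assumed allowance for the sensor looking
    sideways; it is not taken from any real sensor specification.
    """
    if not tle_checksum_ok(line2):
        raise ValueError("element line failed its checksum")
    return abs(scene_lat_deg) <= max_ground_latitude(line2) + off_nadir_margin_deg
```

For the sample line, a scene claimed at 25 degrees north passes the test, while one claimed at 70 degrees north fails because the orbit never travels that far from the equator. Passing proves little on its own; failing is a strong sign that the claimed source is wrong.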

“AI is both the problem and the solution,” said Zhao, lead author of “Deep Fake Geography,” a 2020 study published in the journal Cartography and Geographic Information Science. “We are entering a familiar technological arms race: generation techniques evolve, detection methods follow, and are quickly surpassed.

“We are moving from ‘seeing is believing’ to a much more unstable condition in which seeing no longer guarantees belief. Once synthetic imagery crosses a certain threshold of realism, methods based on visual artifacts, statistical distributions, or frequency analysis will likely become less reliable.”

In fact, countries may be heading toward “a post-truth information environment where the issue is not simply whether an image is real, but how societies negotiate uncertainty and visually mediated evidence,” Zhao said.

If that happens, imagery analysis including technical detection of AI artifacts will need to be combined with tracking the provenance of data “and, critically, public AI literacy,” Zhao said. “Ultimately, this is not just a technical problem, it’s a societal one.”
